Grok 4.1 Fast Rockets to the Top: Inside xAI’s Race Toward Grok 4.2 and Grok 5

Grok 4.1 Fast and the Road to Grok 5: How xAI Is Chasing the Agentic Frontier

xAI’s Grok 4.1 Fast has quickly climbed to the top of competitive “agentic” AI leaderboards, promising a blend of speed, real‑time X (Twitter) integration, web browsing, and code execution tools. With Elon Musk signaling that Grok 4.2 (nicknamed “4.20”) should arrive by Christmas and Grok 5 targeted for the first quarter of 2026, xAI is positioning Grok as a direct challenger to frontier models from OpenAI, Anthropic, Google, and others. This article unpacks what is known about Grok 4.1 Fast, how it fits into the agentic AI ecosystem, and what to expect from Grok 4.2 and Grok 5.

Mission Overview: What Is Grok and Why Does It Matter?

Grok is xAI’s family of large language models (LLMs) designed to be witty, opinionated, and tightly integrated with the X ecosystem. While early Grok models focused on conversational tasks, the 4.x series is explicitly targeting “agentic” behavior: the ability to plan, use tools, browse the web, and perform multi‑step tasks autonomously or semi‑autonomously.

The release of Grok 4.1 Fast adds a new dimension: a latency‑optimized variant that trades a small amount of capability for significantly higher speed and lower cost per request, making it the default candidate for:

- Interactive chat and customer support scenarios

- High‑frequency agent loops (planning–acting–reflecting cycles)

- Real‑time social data analysis via X

- Lightweight code generation and debugging

In parallel, xAI is signaling an aggressive roadmap:

- Grok 4.2 (4.20): targeted for release by Christmas, with expected gains in reasoning and multimodal capabilities.

- Grok 5: targeted for Q1 2026, expected to compete directly with the most advanced frontier models on complex reasoning, multi‑modal understanding, and robust real‑world tool use.

Agentic AI and Leaderboards: Why Grok 4.1 Fast Is Turning Heads

“Agentic AI” refers to LLM-based systems that do more than respond to single prompts: they decompose tasks, call tools, maintain long‑term context, and pursue goals via autonomous loops. Recent months have seen the emergence of agentic leaderboards that evaluate how well models perform on:

- Complex multi‑step coding tasks (e.g., implementing features in a codebase)

- Web research workflows (search–evaluate–synthesize)

- Data analysis and visualization via code execution

- Task planning and execution across multiple tools/APIs

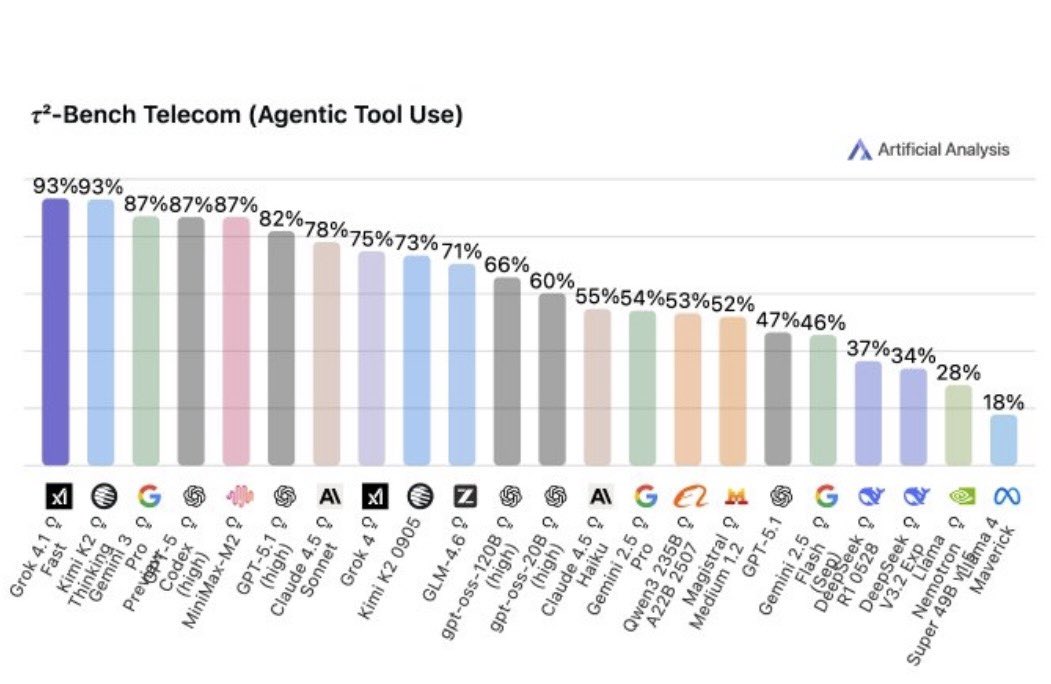

Grok 4.1 Fast reportedly ranks near or at the top of several such leaderboards, particularly those that:

- Weight speed and tool-use efficiency over raw single‑prompt benchmark scores.

- Focus on real‑world coding tasks rather than purely synthetic question-answer benchmarks.

This is strategically important: the real value in agents often lies in how quickly and reliably they can iterate on tools, not only in their “IQ” on static exams like MMLU or GPQA. A slightly less capable model that is 2–4× faster and cheaper can win in production, and Grok 4.1 Fast is clearly aimed at that sweet spot.

Under the Hood: Architecture Trends Behind Grok 4.1 Fast

xAI has not fully disclosed Grok 4.1’s architecture at a granular level, but its capabilities and release strategy strongly suggest a modern transformer-based LLM with many of the now-standard frontier techniques. Based on public information and industry trends, the following features are likely:

- Decoder-only transformer backbone optimized for autoregressive text and code generation.

- Extensive code pre‑training to support strong performance on software engineering and tool calling.

- Mixture-of-Experts (MoE) or variant for balancing scaling and inference cost, or at minimum, heavy inference-time optimization (quantization, compile‑time graph optimization, etc.).

- Large context window (tens to hundreds of thousands of tokens) for long conversations and multi‑file code context.

- Reinforcement Learning from Human Feedback (RLHF) or similar preference‑optimization to align responses with xAI’s conversational style and safety constraints.

“Fast” variants in modern LLM families typically use:

- Smaller active parameter counts at inference time.

- Lower precision (e.g., 4‑bit or 8‑bit weight quantization with minimal quality loss).

- Optimized attention kernels and model graph compilation.

- Specialized serving on GPUs/TPUs with aggressive batching and caching strategies.

Grok 4.1 Fast appears to sit in this category: a latency‑optimized sibling of a more powerful “full” Grok 4.x model, tuned for agent loops and high‑volume usage across the X platform and third‑party integrations.
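To make the lower-precision item concrete, here is a minimal sketch of symmetric 8-bit weight quantization in plain Python. It is a generic illustration of the technique, not a description of how xAI serves Grok; the function names and sample weights are made up for the example.

```python
def quantize_int8(weights):
    """Symmetric per-tensor quantization of floats to signed 8-bit integers."""
    max_abs = max(abs(w) for w in weights)
    # Map the largest magnitude to 127; guard against an all-zero tensor.
    scale = max_abs / 127 if max_abs > 0 else 1.0
    q = [max(-127, min(127, round(w / scale))) for w in weights]
    return q, scale


def dequantize(q, scale):
    """Recover approximate float weights from the integer codes."""
    return [v * scale for v in q]


# Illustrative weights only; real tensors hold millions of values.
weights = [0.12, -0.5, 0.33, 0.0, 0.49]
q, scale = quantize_int8(weights)
restored = dequantize(q, scale)

# Rounding bounds the per-weight error by half a quantization step.
max_error = max(abs(a - b) for a, b in zip(weights, restored))
print(q, round(scale, 6), max_error <= scale / 2 + 1e-12)
```

Each weight is stored in one byte instead of two or four, which is where the memory and bandwidth savings come from; the cost is a small, bounded rounding error per weight.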

Real-Time X Integration: Turning the Social Graph into a Data Firehose

One of Grok’s marquee differentiators is deep, real‑time access to data from X. While most foundation models are trained on static snapshots of the web, Grok is explicitly marketed as being able to answer questions about live conversations, trends, and events on the platform.

This real‑time integration enables applications such as:

- Live trend analysis for finance, politics, sports, and entertainment.

- Reputation and sentiment tracking for brands and public figures.

- Event summarization for breaking news, including threaded explanations and cross‑referencing sources.

- Social listening agents that monitor specific keywords or accounts and produce concise, contextualized briefs.

Technically, this implies that Grok 4.1 Fast is frequently paired with:

- An internal retrieval pipeline over recent X posts and metadata.

- Filtering and ranking layers to remove spam, low‑quality, or policy‑violating content.

- Prompt orchestration that injects retrieved X snippets into Grok’s context so the model can reason over them.

From a research perspective, Grok becomes a hybrid: a static LLM for long‑term knowledge and reasoning, plus a real‑time retrieval system over X for fresh information.
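The retrieve, filter, rank, and inject steps described above can be sketched in a few lines of Python. Everything here is hypothetical: the `Post` fields, the keyword matching, the like-count ranking, and the prompt layout are assumptions chosen for illustration, not xAI's actual pipeline.

```python
from dataclasses import dataclass


@dataclass
class Post:
    author: str
    text: str
    likes: int
    is_spam: bool = False  # assumed to be set by an upstream classifier


def retrieve(posts, query):
    """Naive keyword retrieval: keep posts that mention any query term."""
    terms = query.lower().split()
    return [p for p in posts if any(t in p.text.lower() for t in terms)]


def filter_and_rank(posts, top_k=2):
    """Drop flagged posts, then rank the rest by a simple engagement signal."""
    clean = [p for p in posts if not p.is_spam]
    return sorted(clean, key=lambda p: p.likes, reverse=True)[:top_k]


def build_prompt(question, posts):
    """Inject the retrieved snippets into the model's context."""
    snippets = "\n".join(f"- @{p.author}: {p.text}" for p in posts)
    return f"Recent posts:\n{snippets}\n\nQuestion: {question}"


posts = [
    Post("alice", "Rocket launch delayed by weather", 120),
    Post("bot42", "Rocket launch giveaway, click here", 900, is_spam=True),
    Post("bob", "Launch window reopens tomorrow", 45),
    Post("carol", "Great pasta recipe", 300),
]
top = filter_and_rank(retrieve(posts, "rocket launch"))
prompt = build_prompt("What is happening with the launch?", top)
print(prompt)
```

A production system would presumably replace the keyword match with a search index or embedding lookup, but the shape of the pipeline stays the same.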

Web Browsing and Tool Use: Grok as a General-Purpose Agent

Beyond X data, Grok 4.1 Fast supports general web browsing and a growing set of tools, placing it squarely in the class of agentic models like OpenAI’s GPT‑4o with browsing, Anthropic’s Claude with tools, and Google’s Gemini with extensions.

A typical Grok 4.1 agent loop might follow this pattern:

- Interpret the user’s request and decide whether tools are needed (search, code execution, database queries, etc.).

- Call a tool with structured arguments, e.g., a web search query or an HTTP request.

- Parse and summarize the results, possibly calling more tools or refining the search.

- Produce a final answer that combines model knowledge, retrieved data, and code outputs.
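The four steps above can be sketched as a small loop. This is a toy under stated assumptions: `fake_model` stands in for a real model call, `search_tool` returns canned text, and the message and reply formats are invented for the example rather than taken from any xAI API.

```python
def search_tool(query):
    """Stand-in for a real web search; returns canned text."""
    return f"results for '{query}'"


TOOLS = {"search": search_tool}


def fake_model(messages):
    """Hypothetical model: request one search, then answer from its result."""
    tool_results = [m for m in messages if m["role"] == "tool"]
    if not tool_results:
        return {
            "type": "tool_call",
            "name": "search",
            "args": {"query": messages[0]["content"]},
        }
    return {
        "type": "final",
        "content": f"Answer based on {tool_results[-1]['content']}",
    }


def run_agent(user_request, model=fake_model, max_steps=5):
    """Interpret, call tools, read results, and stop on a final answer."""
    messages = [{"role": "user", "content": user_request}]
    for _ in range(max_steps):
        reply = model(messages)
        if reply["type"] == "final":
            return reply["content"]
        # Dispatch the structured tool call and feed the result back.
        result = TOOLS[reply["name"]](**reply["args"])
        messages.append({"role": "tool", "content": result})
    return "Stopped: step budget exhausted"


answer = run_agent("grok roadmap")
print(answer)
```

The `max_steps` budget matters in practice: without it, a model that keeps requesting tools would loop indefinitely.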

Grok’s code tools further extend this loop:

- Generate code in Python or other languages.

- Execute the code in a constrained environment.

- Inspect errors or outputs.

- Iterate until an acceptable result or timeout is reached.

For tasks like data analysis, algorithm prototyping, or small automation scripts, this can effectively turn Grok into a just‑in‑time programming assistant capable of self‑debugging. The “Fast” variant’s lower latency is particularly important here, as each agent loop can involve dozens of model calls.
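A minimal sketch of the generate, execute, inspect, and iterate cycle follows. The `propose_code` function is a hypothetical stand-in for the model (its first draft contains a deliberate bug), and `exec` with a trimmed set of builtins is used only to keep the example short; it is not a real sandbox, and a production system would isolate execution properly.

```python
def propose_code(attempt, last_error):
    """Hypothetical model stand-in: the first draft is buggy, the retry is not.

    A real model would condition on last_error; this stub ignores it.
    """
    if attempt == 0:
        return "result = sum(values) / count"  # 'count' is undefined
    return "result = sum(values) / len(values)"


def run_snippet(code, inputs):
    """Run code in a fresh namespace and return (ok, result_or_error_text).

    NOTE: exec() is NOT a security boundary; this is illustration only.
    """
    namespace = dict(inputs)
    allowed = {"__builtins__": {"sum": sum, "len": len}}
    try:
        exec(code, allowed, namespace)
        return True, namespace["result"]
    except Exception as exc:
        return False, f"{type(exc).__name__}: {exc}"


def solve(inputs, max_attempts=3):
    """Iterate until a snippet runs cleanly or the attempt budget is spent."""
    error, history = None, []
    for attempt in range(max_attempts):
        code = propose_code(attempt, error)
        ok, outcome = run_snippet(code, inputs)
        history.append((code, ok))
        if ok:
            return outcome, history
        error = outcome  # fed back to the next proposal
    return None, history


result, history = solve({"values": [2, 4, 6]})
print(result, [ok for _, ok in history])
```

Every retry is another model call, which is why per-call latency compounds quickly in loops like this one.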

Performance and Benchmarks: Speed vs. Raw IQ

Public, third‑party benchmarks for Grok 4.1 Fast are still limited compared with those for more mature models, but a general pattern is emerging:

- Competent on coding and reasoning tasks, often near top-tier closed models but with some trade‑offs in edge cases.

- Very strong on agentic leaderboards, where tool‑use, latency, and stability are explicitly measured.

- Competitive on general knowledge and conversational quality, especially when X integration is relevant.

A useful mental model is to think of Grok 4.1 Fast as a high‑end production workhorse that prioritizes responsiveness and cost‑efficiency, with enough intelligence to handle complex multi‑step tasks when coupled with tools and retrieval.

For many organizations, this is more attractive than deploying only the most expensive and slowest frontier model for every request. Instead, a routing layer can:

- Use Grok 4.1 Fast for 80–90% of everyday tasks.

- Escalate the hardest reasoning problems to a future Grok 5 or competitor model.
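One way such a routing layer might look is sketched below. The tier names, the keyword list, and the scoring weights are all placeholders invented for this example; real routers typically rely on a trained classifier or on feedback from failed attempts rather than keyword heuristics.

```python
FAST_MODEL = "fast-tier"        # placeholder label, not a real model ID
STRONG_MODEL = "frontier-tier"  # placeholder label, not a real model ID

# Hypothetical signals that a request needs heavier reasoning.
HARD_WORDS = {"prove", "derive", "theorem", "formally"}


def estimate_difficulty(prompt):
    """Crude difficulty score in [0, 1] from length and keyword cues."""
    words = prompt.lower().split()
    score = 0.0
    if len(words) > 200:
        score += 0.4
    if HARD_WORDS.intersection(words):
        score += 0.6
    return score


def route(prompt, threshold=0.5):
    """Send easy requests to the fast tier and escalate the rest."""
    if estimate_difficulty(prompt) >= threshold:
        return STRONG_MODEL
    return FAST_MODEL


print(route("Summarize this support ticket"))
print(route("Prove that the scheduler is deadlock-free"))
```

Matching whole words rather than substrings keeps a request like "improve the wording" from being escalated just because it contains "prove".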

Roadmap: From Grok 4.1 Fast to Grok 4.2 and Grok 5

Elon Musk has publicly suggested a rapid cadence for Grok releases:

- Grok 4.2 (4.20): targeted for release by Christmas.

- Grok 5: targeted for Q1 2026.

While technical details remain under wraps, based on industry trends we can reasonably expect:

Likely Improvements in Grok 4.2

- Stronger reasoning on math, coding, and logic-heavy tasks.

- Improved multimodality, potentially better handling of images, diagrams, and complex documents.

- Richer tool ecosystem, with more first‑party tools and easier third‑party integration.

- Refined safety and style controls to balance Grok’s signature humor with responsible output.

What Grok 5 Could Bring

Grok 5 is the more ambitious milestone, potentially representing:

- A larger or more efficient backbone model, possibly combining dense and sparse (MoE) layers.

- Substantially better long‑horizon planning and memory, crucial for advanced agents.

- Deeper integration with X, Tesla, and other Musk‑affiliated ecosystems (subject to regulatory and privacy constraints).

- Enhanced multi‑modal capabilities, including video, audio, and richer sensor data.

If delivered on schedule, Grok 5 would arrive in an intensely competitive window: OpenAI, Anthropic, Google, Meta, and others are all iterating rapidly. Success will depend not only on raw model quality but also on ecosystem depth—APIs, tooling, documentation, and integration paths.

Key Application Domains for Grok 4.1 Fast

With its combination of speed, agentic abilities, and real‑time X access, Grok 4.1 Fast is particularly well‑suited to several domains:

1. Social Intelligence and Media Monitoring

Organizations can use Grok as a “social intelligence analyst” that:

- Tracks brand mentions and sentiment on X.

- Summarizes trending topics and their likely drivers.

- Provides early warning for emerging crises or viral content.

2. Developer Productivity and Code Agents

With code tools and fast iteration, Grok 4.1 Fast can act as:

- An in‑IDE coding assistant for generating functions, tests, and documentation.

- A code‑review sidekick suggesting refactors and catching common bugs.

- A semi‑autonomous agent capable of applying small patches, running tests, and reporting results.

3. Research Assistants and Analysts

Combining web browsing, X data, and code tools, Grok can:

- Collect and synthesize information from scientific articles, news, and social media.

- Run basic statistical analyses or simulations via code execution.

- Generate reports, slide outlines, and executive summaries.

4. Customer Support and Operations

In enterprise settings, Grok 4.1 Fast’s responsiveness is well‑matched to:

- Chatbots that can access knowledge bases, ticket systems, and CRM tools.

- Internal support helpers that resolve IT or HR queries.

- Operational copilots that monitor dashboards and raise alerts.

Safety, Alignment, and Responsible Deployment

As Grok’s capabilities expand, so do the stakes for safety and alignment. xAI, like its peers, faces several intertwined challenges:

- Misinformation and Real‑Time Data: With deep access to X, Grok must learn to separate signal from noise, deal with coordinated disinformation, and avoid amplifying harmful content.

- Tool Misuse: Code execution and web access can be abused if not carefully sandboxed and filtered, e.g., for attempting to exploit systems or generate unsafe instructions.

- Privacy and Data Protection: Integrating user data from X and potentially other sources demands strict access control, anonymization, and compliance with regional regulations.

- Bias and Fairness: Training on large web‑scale datasets and social media introduces biases that must be identified and mitigated.

Addressing these issues typically involves multiple layers:

- Safety‑tuned model weights (via RLHF or other preference learning).

- Input and output filters for sensitive content.

- Tool‑level safeguards (e.g., constrained execution environments, rate limits, and sensitive‑action approvals).

- Transparent logging and auditability for high‑risk deployments.

As Grok 4.2 and Grok 5 emerge, the quality of these safety layers will be as important as the raw intelligence of the underlying models, particularly as agentic behavior becomes more autonomous.
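The tool-level safeguards and audit logging mentioned above can be illustrated with a small wrapper. `ToolGuard`, its parameters, and the status strings are hypothetical names for this sketch; it shows a sliding-window rate limit, an approval gate for sensitive actions, and an audit trail, and makes no claim about how xAI implements any of these.

```python
import time


class ToolGuard:
    """Wraps tool calls with a rate limit, an approval gate, and a log."""

    def __init__(self, max_calls, per_seconds, sensitive=()):
        self.max_calls = max_calls
        self.per_seconds = per_seconds
        self.sensitive = set(sensitive)
        self.audit_log = []    # (tool name, status) for every attempt
        self._timestamps = []  # times of recently allowed calls

    def call(self, name, func, *args, approved=False):
        now = time.monotonic()
        # Keep only the calls that still fall inside the sliding window.
        self._timestamps = [
            t for t in self._timestamps if now - t < self.per_seconds
        ]
        if name in self.sensitive and not approved:
            return self._record(name, "needs_approval")
        if len(self._timestamps) >= self.max_calls:
            return self._record(name, "rate_limited")
        self._timestamps.append(now)
        return self._record(name, "allowed", func(*args))

    def _record(self, name, status, result=None):
        self.audit_log.append((name, status))
        return {"status": status, "result": result}


guard = ToolGuard(max_calls=2, per_seconds=60, sensitive={"delete_file"})
first = guard.call("search", str.upper, "grok")
blocked = guard.call("delete_file", print, "report.txt")  # never executed
second = guard.call("search", str.upper, "xai")
third = guard.call("search", str.upper, "x")  # exceeds the limit
print(guard.audit_log)
```

Blocked attempts are logged as well as allowed ones, since the refusals are often the most useful part of an audit trail.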

Competitive Landscape: How Grok Stacks Up

Grok 4.1 Fast enters a crowded and rapidly evolving market. Major competitors include:

- OpenAI with the GPT‑4.x family and the reasoning‑oriented o1 models.

- Anthropic with Claude 3.x and evolving tool‑using agents.

- Google DeepMind with Gemini models across different sizes.

- Meta with open‑weight Llama models that power a vibrant ecosystem.

Grok’s distinct advantages include:

- Native X integration for real‑time social data.

- Tight coupling with Musk’s broader ecosystem (e.g., potential future links with Tesla or SpaceX data).

- A strong focus on agents instead of only chat or static Q&A.

Its key challenges are:

- Matching or surpassing top-tier reasoning and benchmark scores from existing frontier models.

- Building a robust developer ecosystem with SDKs, documentation, and examples.

- Convincing enterprises to adopt Grok in regulated environments where incumbents may already have a foothold.

Technical and Organizational Challenges Ahead

Delivering on the Grok roadmap will require xAI to navigate substantial challenges:

1. Scaling Compute and Infrastructure

Training and serving frontier LLMs demands vast GPU clusters, high‑speed networking, and sophisticated scheduling. Balancing research runs, productization, and public traffic on shared infrastructure is a non‑trivial engineering problem.

2. Data Curation and Freshness

Grok’s unique strength—real‑time access to X—also creates a moving target for data pipelines. Ensuring high‑quality, well‑labeled data while avoiding overfitting to recent controversies or spam requires continual refinement.

3. Tooling and Ecosystem Maturity

For many developers, the choice of model is driven less by raw capability and more by:

- Stability and versioning guarantees.

- High‑quality documentation and code samples.

- SDKs and libraries for popular languages and frameworks.

- Monitoring, observability, and billing transparency.

Building this around Grok 4.1 Fast and its successors will be essential to convert hype into sustained adoption.

4. Governance and Public Trust

Given the politicized nature of social media platforms, any AI tightly coupled to X must contend with scrutiny over:

- Perceived bias or favoritism in content interpretation.

- Handling of political and sensitive topics.

- Transparency about how data from X is used and stored.

Clear governance structures, published safety practices, and independent evaluations will be crucial to strengthen trust in Grok‑powered systems.

Conclusion: Grok’s Fast Lane to the Agentic Future

Grok 4.1 Fast marks a significant step in xAI’s attempt to carve out a distinct identity in the AI landscape. By prioritizing speed, tool use, and real‑time X integration, xAI is betting that the future of AI is not just smarter models but more capable agents—systems that can act, adapt, and collaborate with humans on complex workflows.

The coming year will test whether this bet pays off. If Grok 4.2 arrives on schedule with meaningful capability gains, and Grok 5 in early 2026 can stand shoulder‑to‑shoulder with the best frontier models, xAI will have firmly established itself as a serious competitor. Regardless of competitive outcomes, the rapid evolution of Grok underscores a broader trend: agentic AI is moving from research prototypes to production reality, and models like Grok 4.1 Fast are at the forefront of that shift.

For developers, researchers, and businesses, the practical takeaway is clear: start designing workflows, applications, and governance frameworks around agents, not just chatbots. Grok’s trajectory is a reminder that the next generation of AI systems will be less about single prompts and more about continuous, tool‑rich collaboration with machine intelligence.

References / Sources

- xAI – Official website and Grok documentation.
  https://x.ai

- X (Twitter) Engineering and xAI announcements, accessed November 2025.
  https://twitter.com/xai

- NextBigFuture coverage of Grok 4.1 Fast and roadmap comments from Elon Musk, November 2025.
  https://www.nextbigfuture.com

- Vaswani, A. et al. (2017). "Attention Is All You Need." Advances in Neural Information Processing Systems.
  https://arxiv.org/abs/1706.03762

- OpenAI, Anthropic, Google DeepMind, and Meta technical reports on large language models and tool‑using agents, 2023–2025.
  https://openai.com/research, https://www.anthropic.com/research, https://deepmind.google/research, https://ai.meta.com/research/

- W3C Web Accessibility Initiative – WCAG 2.2 Guidelines.
  https://www.w3.org/TR/WCAG22/