Grok 4.1 Fast: How xAI’s New Model Raced to the Top of Agentic AI Leaderboards

Grok 4.1 Fast and the Race to Agentic AI: Inside xAI’s Rapid Model Roadmap

xAI’s Grok 4.1 Fast has rapidly climbed to the top of agentic AI leaderboards by combining high-speed inference with real-time access to X data, web browsing, and integrated code tools. This article explores what makes Grok 4.1 Fast distinctive, how it fits into xAI’s roadmap toward Grok 4.2 by Christmas and Grok 5 in early 2026, and why these developments matter for the future of autonomous AI agents.

With competition intensifying among frontier models from OpenAI, Anthropic, Google, Meta, and others, xAI is positioning Grok not just as a conversational assistant but as an “agentic” platform: a system that can perceive, plan, and act on behalf of users across the web and code ecosystems. Grok 4.1 Fast is tuned to prioritize latency and tool-use efficiency, a key requirement for agent frameworks that must orchestrate many rapid calls to language models.

This piece synthesizes public information up to November 23, 2025, including xAI announcements, independent benchmarks, and broader AI research trends, to give a technically grounded overview suitable for readers who follow AI research, MLOps, or product development.

Mission Overview: What Is Grok 4.1 Fast?

Grok 4.1 Fast is a high-performance variant in the Grok 4.x family designed to trade a small amount of peak reasoning capability for:

- Much lower latency per request

- Higher throughput (more requests per second per GPU)

- Cost-efficiency for agent-style workloads

- Tight integration with external tools and live data streams

In practice, Grok 4.1 Fast is engineered for scenarios where the model is called repeatedly in small bursts, such as:

- Multi-step web-browsing agents that must fetch, summarize, and cross-check pages quickly

- Realtime dashboards powered by X data streams

- Code-assistance loops where many short completions are preferred over a few giant ones

- Autonomous research agents that iteratively query, reason, and plan

xAI has emphasized three integrative capabilities that distinguish Grok 4.1 Fast in this agentic context:

- Realtime X data: Direct access to up-to-the-minute content and engagement signals from the X platform.

- Web browsing: The ability to pull in external documents, navigate pages, and ground outputs in current information.

- Code tools: Structured interaction with code execution environments and repositories, supporting not just code generation but debugging and refactoring.

While xAI has not released full technical specifications—such as parameter count, exact training corpus composition, or architecture diagrams—public performance and usage patterns suggest a frontier-class transformer-based model with aggressive optimization for inference efficiency.

Leading the Agentic AI Leaderboard

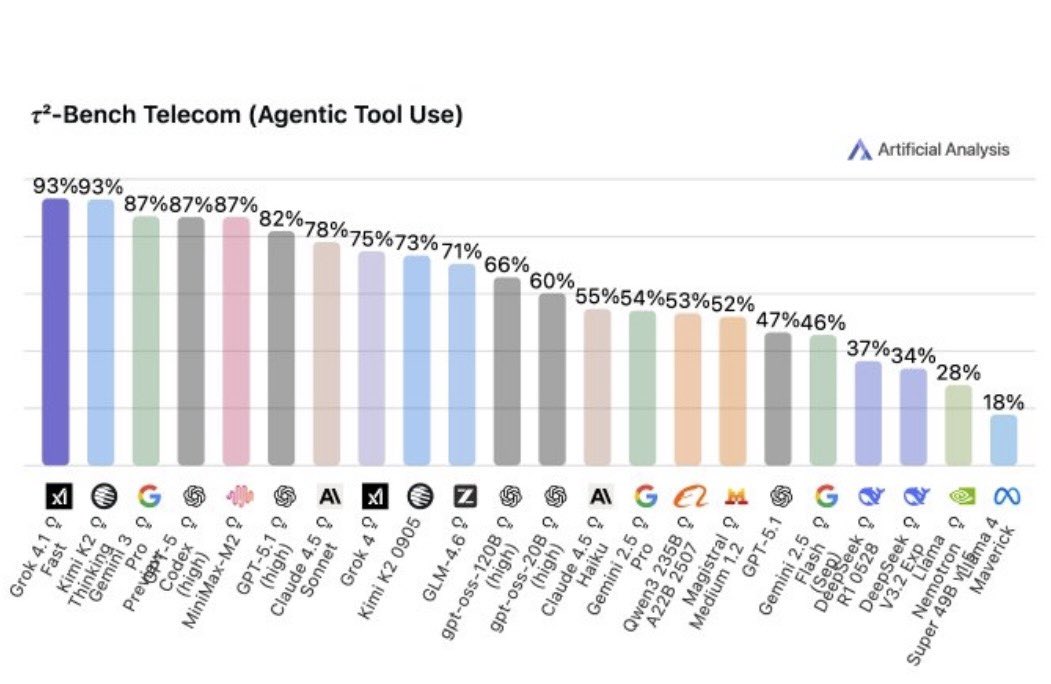

xAI and independent evaluators have highlighted Grok 4.1 Fast’s strong performance on “agentic” benchmarks—tests where the model must coordinate tools, plan multi-step actions, and adapt to changing context rather than simply answer static questions.

In agentic evaluations, the key metric is often not the single-response IQ of the model, but how well it can orchestrate many small, tool-augmented steps to accomplish a larger task reliably and quickly.

Agent leaderboards typically measure:

- Task-completion rate: How often an agent successfully finishes a complex multi-step objective.

- Tool-use correctness: Whether calls to search, browsing, or code tools are well-formed and semantically appropriate.

- Latency per step: Critical for usability; a slow agent is effectively unusable in production workflows.

- Resource efficiency: How many calls, tokens, and external API hits are used per completed task.
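
As a rough illustration of how the four measures above could be computed from run logs, here is a minimal Python sketch. The `Episode` record and its field names are hypothetical and not taken from any specific leaderboard; real harnesses define success and tool correctness in their own ways.

```python
from dataclasses import dataclass


@dataclass
class Episode:
    """One agent run on one task (hypothetical log record)."""
    succeeded: bool            # did the agent finish the objective?
    tool_calls: int            # total tool calls issued
    valid_tool_calls: int      # calls judged well-formed and appropriate
    step_latencies_s: list     # wall-clock seconds for each step
    tokens_used: int           # prompt + completion tokens for the run


def summarize(episodes):
    """Aggregate per-episode logs into leaderboard-style metrics."""
    total_calls = sum(e.tool_calls for e in episodes)
    all_steps = [t for e in episodes for t in e.step_latencies_s]
    completed = sum(1 for e in episodes if e.succeeded)
    return {
        "task_completion_rate": completed / len(episodes),
        "tool_use_correctness": (
            sum(e.valid_tool_calls for e in episodes) / total_calls
            if total_calls else 0.0
        ),
        "mean_latency_per_step_s": (
            sum(all_steps) / len(all_steps) if all_steps else 0.0
        ),
        # Resource efficiency: tokens spent per task that actually finished.
        "tokens_per_completed_task": (
            sum(e.tokens_used for e in episodes) / completed
            if completed else float("inf")
        ),
    }
```

Dividing total tokens by completed tasks (rather than by all tasks) penalizes agents that burn resources on runs that never finish, which is one reasonable reading of "resource efficiency".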

Grok 4.1 Fast’s advantage lies not only in raw reasoning capability, which is competitive with other frontier models, but in how efficiently it can iterate inside agent loops. Latency savings compound when:

- Every tool call is 20–40% faster

- The model needs fewer tokens to maintain context

- The system uses shorter, more focused prompts optimized for the model

These properties make Grok 4.1 Fast an attractive “default engine” for developers building sophisticated AI workflows on top of xAI’s stack.
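
A small worked example shows why such savings multiply rather than add. The numbers below are illustrative assumptions, not measurements of Grok 4.1 Fast, and the sketch reads "30% faster" as a 30% reduction in per-step latency.

```python
def total_latency_s(steps, step_latency_s, factors):
    """Total wall-clock time for an agent loop after multiplicative savings."""
    per_step = step_latency_s
    for factor in factors:
        per_step *= factor  # each independent saving scales what remains
    return steps * per_step


# Assumed baseline: a 25-step task at 2.0 seconds per step.
baseline = total_latency_s(steps=25, step_latency_s=2.0, factors=[])

# Assumed savings: 30% from faster calls, 20% from shorter context and prompts.
optimized = total_latency_s(steps=25, step_latency_s=2.0, factors=[0.7, 0.8])

print(f"baseline: {baseline:.1f} s, optimized: {optimized:.1f} s")
```

Under these assumptions the two savings combine to 0.7 × 0.8 = 0.56 of the original per-step time, so the task drops from about 50 seconds to about 28 seconds, a 44% reduction from two individually modest improvements.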

Roadmap: Grok 4.2 by Christmas and Grok 5 in Q1 2026

Elon Musk and xAI have signaled an aggressive roadmap:

- Grok 4.20 (4.2) by Christmas: A holiday release focusing on improved reasoning depth, safety alignment, and perhaps more robust multi-modal capabilities while maintaining speed.

- Grok 5 in Q1 2026: A major next-generation model that is expected to compete with or exceed the strongest general-purpose models in capabilities such as long-context reasoning, code synthesis, and multi-agent coordination.

While concrete architectures are not yet public, a reasonable interpretation based on industry patterns is:

- Grok 4.x: An optimized family based on a relatively stable core architecture, with improvements in training data, fine-tuning, tool integration, and inference efficiency.

- Grok 5: A larger architectural jump—potentially involving mixture-of-experts (MoE) techniques, longer context windows, or improved multi-modal integration (text, images, possibly video and structured data).

The compressed timeline (a new major model within roughly one quarter) is consistent with:

- Large-scale GPU capacity at xAI’s disposal (notably through xAI’s close ties to X and related infrastructure investments).

- Re-use of existing data pipelines and tooling from the Grok 4 series.

- Layered training strategies, where foundational capabilities are built on earlier models with heavy use of distillation and transfer learning.

From an industry perspective, the accelerated roadmap underscores how quickly the “frontier” is moving. Models that are state-of-the-art in mid-2025 may be surpassed within months, shifting the focus from static capability comparisons to continuous systems-level improvement, including data freshness, tool ecosystems, and hardware efficiency.

Under the Hood: Likely Architecture and Optimization Strategies

xAI has not fully disclosed Grok 4.1 Fast’s internals, but we can infer several design choices from observable behavior and general trends in large language model (LLM) research:

- Transformer-based core: A causal decoder-only transformer, likely leveraging architectural refinements such as grouped-query attention (GQA) or multi-head attention optimizations for speed.

- Context length: Support for extended contexts to power browsing and multi-step agents—potentially 128k tokens or more, using efficient attention variants such as sliding-window or hierarchical attention.

- Inference-time optimization: Techniques like KV-cache reuse, low-precision (quantized) inference, possibly prepared for with quantization-aware training, and CUDA kernel fusion to maximize GPU utilization.

- Tool-call grammar: Structured output formats (e.g., JSON-based tool calls) learned via supervised fine-tuning and reinforcement learning from human and synthetic feedback.

- System-level routing: Potential use of smaller expert models for simpler tasks (classification, short summarization) alongside Grok 4.1 Fast for complex tool orchestration.
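
To make one of the inferred techniques concrete, the sketch below builds a sliding-window causal attention mask in plain Python. xAI has not said which attention variant Grok uses, so this only illustrates the general idea mentioned above: each position attends to itself and a fixed number of recent positions, which keeps attention cost per token bounded as the context grows. The function name and the `window` parameter are illustrative.

```python
def sliding_window_causal_mask(seq_len, window):
    """Return mask[i][j] == True when query position i may attend to key j.

    Causal: j must not be in the future (j <= i).
    Sliding window: j must be among the last `window` positions (i - j < window).
    """
    return [
        [(j <= i) and (i - j < window) for j in range(seq_len)]
        for i in range(seq_len)
    ]


mask = sliding_window_causal_mask(seq_len=5, window=3)
for row in mask:
    # Print 1 for "may attend" and 0 for "masked out".
    print("".join("1" if allowed else "0" for allowed in row))
```

Each row contains at most `window` allowed positions, so the work per token stays constant instead of growing with the full sequence length.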

The “Fast” designation likely implies a mix of:

- Moderate parameter count relative to a “Max” model: Enough capacity for strong reasoning, but small enough to achieve low latency on common GPU hardware.

- High-quality distillation: A training process where a larger, more capable teacher model guides the Fast variant, preserving much of the intelligence in a smaller footprint.

- Expert prompt engineering baked into system prompts: System-level instructions that compress what otherwise would be large, verbose prompts into concise, model-optimized directives.
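
For readers unfamiliar with distillation, the following sketch shows the standard temperature-softened objective in plain Python: the student is penalized by the KL divergence between the teacher's and its own output distributions. Whether and how xAI uses distillation for the Fast variant is not public; the function names and the temperature value are assumptions for illustration.

```python
import math


def softmax(logits, temperature=1.0):
    """Numerically stable softmax over a list of logits."""
    scaled = [x / temperature for x in logits]
    peak = max(scaled)
    exps = [math.exp(x - peak) for x in scaled]
    total = sum(exps)
    return [e / total for e in exps]


def distillation_loss(student_logits, teacher_logits, temperature=2.0):
    """KL(teacher || student) on softened distributions, scaled by T^2.

    The T^2 factor is the usual correction that keeps gradient magnitudes
    comparable across temperatures.
    """
    p = softmax(teacher_logits, temperature)  # teacher target distribution
    q = softmax(student_logits, temperature)  # student prediction
    kl = sum(pi * math.log(pi / qi) for pi, qi in zip(p, q) if pi > 0)
    return kl * temperature ** 2


teacher = [4.0, 1.0, 0.5]
print(distillation_loss(student_logits=[4.0, 1.0, 0.5], teacher_logits=teacher))
print(distillation_loss(student_logits=[1.0, 3.0, 0.5], teacher_logits=teacher))
```

The first call compares identical logits and yields zero loss; the second, where the student prefers a different token, yields a positive loss that training would push down.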

For developers and researchers, the key takeaway is that performance in agentic workloads is not just about raw model scale; it is the combined result of architecture, inference optimization, and tight integration with tools and data sources.

Realtime X Data, Web Browsing, and Tool Integration

One of Grok 4.1 Fast’s headline differentiators is realtime access to X data, combined with a general-purpose web browser and code tools. This trio turns the model into a bridge between:

- Streaming social signals (X timelines, trends, discussions)

- Static and semi-static knowledge (web pages, documentation, research articles)

- Executable environments (code, scripts, data pipelines)

Architecturally, tool integration typically involves:

- The model generating structured “tool call” outputs indicating which tool it wants to use and with what parameters.

- An orchestrator or runtime environment that executes the tool call (e.g., performing an HTTP request, running a code snippet, querying X APIs).

- Feeding the tool’s result back into the model as new context (often tagged as tool output).

- The model updating its plan and possibly issuing further tool calls until the user’s goal is satisfied.
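
The four steps above can be sketched as a short orchestration loop. This is a minimal illustration, not xAI's runtime: the model is replaced by a scripted stand-in function, the JSON shape (`tool`, `arguments`) is an assumed convention, and `fake_search` is a hypothetical tool.

```python
import json


def run_agent(model, tools, user_goal, max_steps=5):
    """Loop: ask the model, execute any tool call, feed the result back."""
    messages = [{"role": "user", "content": user_goal}]
    for _ in range(max_steps):
        reply = model(messages)
        messages.append({"role": "assistant", "content": reply})
        try:
            call = json.loads(reply)
        except json.JSONDecodeError:
            return reply  # plain text is treated as the final answer
        if not isinstance(call, dict) or "tool" not in call:
            return reply
        tool = tools.get(call["tool"])
        if tool is None:
            result = f"error: unknown tool {call['tool']!r}"
        else:
            result = tool(**call.get("arguments", {}))
        # Tag the output so the model can tell it apart from user text.
        messages.append({"role": "tool", "content": result})
    return "stopped: step limit reached"


def scripted_model(messages):
    """Stand-in for a real LLM call: search once, then answer."""
    last = messages[-1]
    if last["role"] == "tool":
        return f"Final answer based on: {last['content']}"
    return json.dumps({"tool": "search", "arguments": {"query": last["content"]}})


def fake_search(query):
    """Hypothetical tool; a real one would issue an HTTP request."""
    return f"3 results for '{query}'"


answer = run_agent(scripted_model, {"search": fake_search}, "fusion startups")
print(answer)
```

The `max_steps` cap matters in practice: without it, a model that keeps issuing tool calls would loop indefinitely and accumulate cost.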

Realtime X integration grants Grok 4.1 Fast:

- Freshness: Answers can reference events from minutes or seconds ago.

- Sentiment and trend awareness: Useful for media monitoring, market sentiment analysis, and social research.

- Synthetic data generation: Signals from X can also inform fine-tuning and alignment by providing rich examples of human discourse, including edge cases.

However, this also introduces challenges: misinformation, biased sampling, and content moderation at scale. Aligning the model so that it can consume noisy, sometimes adversarial content without amplifying harm is a central problem in real-world deployment.

Agentic Patterns: How Grok 4.1 Fast Is Used in Practice

Although specific customer deployments are often proprietary, we can outline typical agentic patterns that leverage Grok 4.1 Fast:

1. Research and Synthesis Agents

These agents:

- Take a high-level research question (e.g., “Compare emerging fusion startups and their funding rounds”).

- Plan a multi-step search, browsing relevant sites, filings, and X discussions.

- Extract and normalize data (names, dates, funding amounts, technical claims).

- Cross-check sources and flag inconsistencies.

- Produce a structured report or knowledge graph.
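
The cross-checking step can be illustrated with a small sketch that groups already-normalized claims by entity and field, then flags any field where sources disagree. The tuple layout, the function name, and the sample records (including "StartupA" and its figures) are invented placeholders, not real data.

```python
from collections import defaultdict


def flag_inconsistencies(claims):
    """Return fields whose extracted values differ across sources.

    claims: iterable of (entity, field, value, source) tuples.
    Result: {(entity, field): {value: [sources, ...]}} for conflicts only.
    """
    grouped = defaultdict(lambda: defaultdict(list))
    for entity, field, value, source in claims:
        grouped[(entity, field)][value].append(source)
    return {
        key: dict(values)
        for key, values in grouped.items()
        if len(values) > 1  # more than one distinct value means a conflict
    }


# Placeholder records standing in for extracted, normalized data.
claims = [
    ("StartupA", "series_b_usd", 50_000_000, "press-release"),
    ("StartupA", "series_b_usd", 55_000_000, "news-article"),
    ("StartupA", "founded", 2019, "press-release"),
    ("StartupA", "founded", 2019, "company-site"),
]
conflicts = flag_inconsistencies(claims)
print(conflicts)
```

Only the funding field is flagged here, because the two sources for the founding year agree. Exact-match comparison works only after normalization, which is why the extraction step precedes it in the list above.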

2. Code-Aware Operations Assistants

Here, Grok 4.1 Fast is wired into:

- Version control systems (GitHub/GitLab)

- CI/CD logs and observability dashboards

- Issue trackers and documentation

It can:

- Diagnose failing builds by highlighting suspicious commits and error messages.

- Generate and test candidate patches.

- Explain system behavior in natural language for on-call engineers.

3. Market and Media Intelligence

Using realtime X data and web browsing, agents can:

- Track how different narratives evolve around specific companies, technologies, or political issues.

- Segment audiences by topic, sentiment, or engagement patterns.

- Alert users to qualitatively novel events—e.g., “first time this keyword appears in a major outlet.”
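
The last item, alerting on a first appearance, reduces to remembering what has already been seen. The sketch below is a deliberately naive version using case-insensitive substring matching; the class name, outlet names, and keyword are hypothetical, and a production system would need entity resolution and persistence.

```python
class FirstSeenAlerter:
    """Alert the first time a tracked keyword shows up in a major outlet."""

    def __init__(self, keywords, major_outlets):
        self.keywords = [k.lower() for k in keywords]
        self.major_outlets = {o.lower() for o in major_outlets}
        self.already_seen = set()

    def observe(self, outlet, text):
        """Return alert messages triggered by one new article or post."""
        if outlet.lower() not in self.major_outlets:
            return []  # mentions outside major outlets are ignored
        lowered = text.lower()
        alerts = []
        for keyword in self.keywords:
            if keyword in lowered and keyword not in self.already_seen:
                self.already_seen.add(keyword)
                alerts.append(
                    f"first mention of '{keyword}' in a major outlet: {outlet}"
                )
        return alerts


alerter = FirstSeenAlerter(["stellarator"], ["Example Times"])
print(alerter.observe("Small Blog", "A stellarator update"))
print(alerter.observe("Example Times", "New Stellarator design announced"))
print(alerter.observe("Example Times", "More stellarator coverage"))
```

Only the second call produces an alert: the first comes from an outlet that is not on the major list, and the third repeats a keyword that has already fired.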

In all these cases, Grok 4.1 Fast acts as the central reasoning node, with speed and tool competence directly translating into better user experience and system reliability.

Scientific and Technological Significance

Grok 4.1 Fast is part of a broader paradigm shift from “static chatbots” to “agentic systems.” This shift has multiple scientific and technological implications:

1. From Benchmarks to Behaviors

Traditional model evaluation emphasizes static benchmarks (MMLU, GSM8K, HumanEval, etc.). Agentic systems foreground:

- Non-myopic planning: Can the model structure long-term strategies rather than only local, short-sighted steps?

- Tool selection: Can it pick the right tool and recognize when not to use one, avoiding unnecessary overhead?

- Adaptation: Does it refine its approach as it learns more from browsing and code execution?

This pushes evaluation research toward more complex, dynamic environments.

2. Human–AI Collaboration Patterns

Fast, capable agents change how humans orchestrate work:

- Humans define goals, constraints, and success criteria.

- Agents explore solution spaces, test hypotheses, and report back.

- Humans audit, correct, and refine rather than manually performing every subtask.

This division of labor is particularly valuable in complex technical domains where exploration is time-consuming but evaluation by domain experts remains essential.

3. Data-Centric AI and Live Environments

Realtime integration with X makes Grok a key case study in deploying models that live in constantly changing data environments:

- Models must remain calibrated as distributions drift.

- Safety filters must detect and mitigate harmful or misleading content on the fly.

- Feedback loops—where model outputs influence future X discourse—must be monitored to avoid runaway amplification of errors or biases.

These dynamics make Grok 4.1 Fast relevant not only to engineers and product teams, but also to researchers in machine learning, human–computer interaction, and AI governance.

Key Challenges: Safety, Reliability, and Governance

Powerful agentic systems bring substantial risks alongside their capabilities. Grok 4.1 Fast is no exception. Major challenge areas include:

1. Hallucinations and Over-Confidence

Even when connected to the web, models can:

- Misinterpret sources

- Cherry-pick supporting evidence

- Fill gaps with plausible-sounding but incorrect details

To mitigate this, xAI and others employ:

- Source attribution: Explicit citations to external content.

- Uncertainty estimation: Encouraging the model to state confidence levels and offer caveats.

- Verification loops: Having secondary checks or models validate critical outputs.

2. Misuse and Abuse

Any powerful model can be misused, for example to generate misleading narratives, manipulate public opinion, or automate low-level cyber operations. Responsible deployment of Grok 4.1 Fast requires:

- Content filters and guardrails that block obviously harmful requests.

- Rate limiting and auditing for sensitive tool capabilities (e.g., automated mass messaging).

- Clear policies and enforcement mechanisms against abusive use cases.
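
Rate limiting for sensitive tools is commonly implemented with a token bucket. The sketch below is a generic version of that technique, not a description of xAI's safeguards; the class name and parameters are illustrative, and the clock is injectable so the behavior can be tested deterministically.

```python
import time


class TokenBucket:
    """Allow bursts up to `capacity`, refilling at `rate_per_s` tokens per second."""

    def __init__(self, rate_per_s, capacity, clock=time.monotonic):
        self.rate = rate_per_s
        self.capacity = capacity
        self.clock = clock
        self.tokens = float(capacity)  # start with a full bucket
        self.last = clock()

    def allow(self, cost=1.0):
        """Return True and spend tokens if the call is within the limit."""
        now = self.clock()
        elapsed = now - self.last
        self.tokens = min(self.capacity, self.tokens + elapsed * self.rate)
        self.last = now
        if self.tokens >= cost:
            self.tokens -= cost
            return True
        return False


# Example: a sensitive tool limited to bursts of 2 calls, refilled at 1 per second.
fake_now = [0.0]
bucket = TokenBucket(rate_per_s=1.0, capacity=2, clock=lambda: fake_now[0])
decisions = [bucket.allow(), bucket.allow(), bucket.allow()]
fake_now[0] = 1.0  # one second passes
decisions.append(bucket.allow())
print(decisions)
```

An orchestrator would call `allow()` before executing a sensitive tool call and log every refusal, which covers the auditing half of the requirement.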

3. Bias and Representation

Training on large-scale web and social media data can encode biases about:

- Demographics (race, gender, geography, etc.)

- Political positions and ideological framings

- What topics receive attention or are considered “normal”

Addressing these issues involves:

- Careful dataset curation and debiasing techniques.

- Post-hoc alignment through reinforcement learning from human feedback (RLHF) and related methods.

- Transparency about limitations and failure modes.

4. Evaluation of Agentic Systems

Evaluating an agent, not just a model, is complex. It involves:

- End-to-end task benchmarks that include real web environments.

- Long-horizon tests where failures may only appear after many steps.

- Human-in-the-loop trials where users interact with agents under realistic conditions.

For research and governance communities, Grok 4.1 Fast and its successors provide a concrete context to develop such evaluation frameworks.
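
A short calculation shows why long-horizon tests matter. If each step succeeds independently with the same probability (a simplifying assumption, since real agent errors are correlated and sometimes recoverable), whole-task reliability decays geometrically with the number of steps. The 98% per-step figure is illustrative.

```python
def episode_success_probability(per_step_success, steps):
    """Probability that every one of `steps` independent steps succeeds."""
    return per_step_success ** steps


for steps in (5, 20, 50):
    p = episode_success_probability(0.98, steps)
    print(f"{steps:>2} steps at 98% per step -> {p:.1%} end-to-end")
```

Under this model, an agent that looks nearly flawless on single steps completes only about two in three 20-step tasks and a little over one in three 50-step tasks, which short benchmarks would never reveal.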

Ecosystem and Competitive Landscape

Grok 4.1 Fast enters a crowded field of frontier models with agent ambitions, including:

- OpenAI’s GPT-4.1 and successor models with tools and Agents API.

- Anthropic’s Claude 3.x family with function calling and long-context reasoning.

- Google’s Gemini 2.x models tightly integrated with Search and Workspace.

- Meta’s open-weight Llama series, heavily used in self-hosted agent stacks.

xAI’s distinctive advantages center on:

- Deep integration with X: Realtime social graph data and distribution channels.

- Emphasis on speed: Grok 4.1 Fast is explicitly tuned for low-latency agentic use.

- Hardware and scaling commitments: Public statements emphasize large GPU investments and rapid iteration.

For developers and organizations deciding between ecosystems, considerations include:

- API stability and pricing

- Latency and throughput guarantees

- Tooling and SDK maturity

- Compliance, data residency, and security requirements

- Integration with existing data and productivity stacks (e.g., cloud providers, office suites)

The competition is likely to drive rapid improvements across all providers, benefiting end users and accelerating innovation in both core models and agent frameworks.

Looking Ahead: Grok 5 and Beyond

The planned launch of Grok 5 in Q1 2026 suggests that Grok 4.1 Fast is a stepping stone toward a more ambitious vision. Based on current research trajectories, we can anticipate several likely features of Grok 5 and its contemporaries:

- Richer multi-modality: More seamless handling of text, images, diagrams, and possibly time-series or audio, enabling agents to reason across diverse data formats.

- Longer and more reliable context windows: Better support for book-length documents, large codebases, or extended conversations without degradation.

- Improved meta-cognition: Models that are better at self-critiquing, revising, and deferring when uncertain.

- Multi-agent coordination: Systems where multiple specialized agents collaborate, negotiate, and divide work under a supervising orchestrator.

- Stronger safety frameworks: More robust techniques for aligning behavior in open-ended, real-world environments with complex norms and regulations.

Grok 4.1 Fast’s track record on agentic leaderboards and in developer adoption will help reveal:

- Which usage patterns are worth optimizing further.

- Where users experience friction or reliability issues.

- What governance mechanisms prove necessary in practice.

These lessons will inform the design and deployment of Grok 4.2, Grok 5, and their successors.

Conclusion

Grok 4.1 Fast marks an important step in the evolution of xAI’s ecosystem: a model tuned not simply for benchmark scores, but for the realities of agentic AI—fast, tool-rich, and tightly integrated with live data streams such as X.

Its leadership on agentic leaderboards underscores a broader industry trend: capability now depends as much on system design, tool orchestration, and hardware optimization as on raw model size. The upcoming releases of Grok 4.2 by Christmas and Grok 5 in early 2026 promise even deeper capabilities and will likely intensify competition across the AI landscape.

For scientists, engineers, and technologists, the key questions ahead are less about whether these systems will be powerful—they clearly will be—and more about how to ensure they are:

- Reliable enough for mission-critical workflows

- Aligned with human values and legal norms

- Transparent and evaluable in complex, dynamic environments

Grok 4.1 Fast is an early, influential example of what such systems can look like in practice. Watching how it is adopted, critiqued, and iterated will provide valuable insights into the future of agentic AI.
