29 Tesla Robotaxis in Austin: Inside the Quiet Revolution of Autonomous Ride-Hailing

In late 2025, independent trackers began cataloging Tesla “robotaxis” in major U.S. cities, noting at least 29 such vehicles operating in Austin and 97 in the San Francisco Bay Area. These cars, identifiable by unique license plates and distinctive sensor‑light configurations, hint at Tesla’s push toward a fully autonomous ride‑hailing network. While Tesla has not yet launched a public, fully driverless service comparable to Waymo’s or to Cruise’s former service, these sightings offer valuable insight into deployment strategy, testing density, and how an AI‑first automaker is preparing for a post‑driver era.

This article dissects what the Tesla Robotaxi Tracker data tells us, clarifies what is known versus speculative, and places Tesla’s progress in the broader context of autonomous vehicles (AVs), urban mobility, and AI safety. It is written for readers who follow cutting‑edge transportation, AI engineering, and regulatory developments and want a structured, technically accurate overview without hype.

Mission Overview: What the Tesla Robotaxi Tracker Is Revealing

The “Tesla Robotaxi Tracker” referenced by NextBigFuture aggregates public sightings of specially identified Tesla vehicles. Enthusiasts and local observers log:

- License plates known or believed to be associated with Tesla’s internal robotaxi or ride‑hail test fleet.

- Locations and timestamps of sightings (e.g., Austin city center, South Congress, Silicon Valley corridors).

- Notable behaviors, such as repeated loops on specific streets, unusual parking patterns, or off‑hours testing.

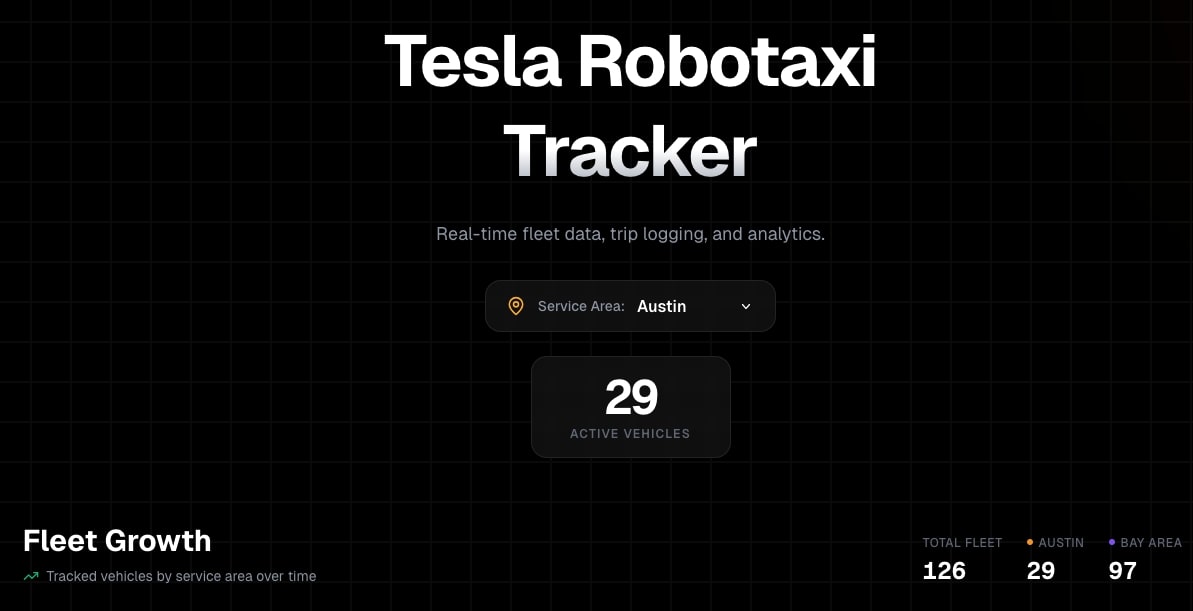

As of the latest snapshot, the tracker lists:

- 29 vehicles in Austin, Texas

- 97 vehicles in the San Francisco Bay Area

These numbers do not represent Tesla’s full global test fleet, but they do provide a rough proxy for how intensively Tesla is testing robotaxi‑oriented configurations in two strategically chosen markets: the Bay Area, where much of U.S. AV regulation and testing is centered, and Austin, one of the country’s fastest‑growing “Sun Belt” tech hubs.
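To make the counting concrete, here is a minimal sketch of how sighting logs like these could be reduced to a per‑region vehicle count. The `Sighting` record, its field names, and the sample plates are hypothetical and are not the tracker’s actual schema; the point is only that repeat sightings of one plate should count as a single vehicle.

```python
from collections import defaultdict
from dataclasses import dataclass
from datetime import datetime


@dataclass(frozen=True)
class Sighting:
    """One logged observation (hypothetical schema, not the tracker's own)."""
    plate: str          # license plate as reported by the observer
    region: str         # e.g. "Austin" or "Bay Area"
    seen_at: datetime   # when the vehicle was seen
    note: str = ""      # free-text behavior note


def vehicles_per_region(sightings):
    """Count distinct plates per region so repeat sightings do not inflate totals."""
    plates = defaultdict(set)
    for s in sightings:
        # Normalize so "abc 1234" and "ABC1234" are treated as the same vehicle.
        plates[s.region].add(s.plate.replace(" ", "").upper())
    return {region: len(seen) for region, seen in plates.items()}


# Made-up sample data for illustration only.
sample = [
    Sighting("ABC1234", "Austin", datetime(2025, 11, 1, 9, 30), "looping South Congress"),
    Sighting("abc 1234", "Austin", datetime(2025, 11, 2, 22, 5), "off-hours testing"),
    Sighting("XYZ9876", "Bay Area", datetime(2025, 11, 2, 14, 0)),
]
print(vehicles_per_region(sample))  # {'Austin': 1, 'Bay Area': 1}
```

A count built this way is a lower bound on the fleet: it only includes vehicles that someone happened to see and report.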

“We’re watching the early footprint of a system that, if it scales, could fundamentally change how cities think about roads, parking, and ownership.” — Brian Wang, futurist and founder of NextBigFuture

Why Austin and the Bay Area Matter

Tesla’s apparent concentration of robotaxi‑ready vehicles in Austin and the Bay Area is not random. It reflects a strategic blend of:

- Regulatory landscape – California is strict, but it is also the home base of many AV regulations and test programs. Texas, by contrast, is comparatively permissive and has actively courted autonomous vehicle deployments.

- Data richness – The Bay Area offers dense, complex traffic patterns and challenging road geometries. Austin combines rapidly growing traffic with a mix of urban freeways and residential zones.

- Corporate footprint – Tesla’s engineering and leadership presence in the Bay Area and its Gigafactory and corporate operations in Austin make both regions ideal for short feedback loops between on‑road testing and in‑house development.

From an AV engineering standpoint, diversity of driving environments is crucial. Training an end‑to‑end neural policy to handle both San Francisco’s hairpin streets and Austin’s wide arterials offers a robust test of generalization.

Technology: Inside Tesla’s Robotaxi Vision Stack

Unlike most AV competitors, Tesla has bet on a camera‑centric stack: no lidar, little or no radar, and a heavy reliance on high‑resolution cameras plus a powerful in‑car AI computer. Tesla’s robotaxi push rests on three main pillars:

1. End‑to‑End Neural Networks for Driving

Through its Full Self‑Driving (FSD) suite, Tesla has migrated from modular perception‑planning‑control pipelines to increasingly end‑to‑end neural networks that map sensor inputs directly to driving actions. This approach:

- Reduces hand‑written rules that can be brittle in edge cases.

- Uses vast fleet data to learn subtle driving cues (e.g., social behavior at unprotected left turns).

- Allows continuous iteration as new data comes from millions of cars.

2. Dojo and Massive Offline Training

Tesla’s Dojo supercomputer is designed to accelerate training of these large video‑based networks. While public details are limited, the intent is clear:

- Ingest multi‑camera video and associated driving telemetry from the fleet.

- Train networks on rare or highly complex events that human engineers tag manually or that automated triggers detect (e.g., near‑misses).

- Deploy improved models over‑the‑air to vehicles, forming a virtuous data loop.
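As an illustration of what an automatic trigger might look like, the sketch below flags hard‑braking moments in a speed trace as candidate near‑miss clips. This is not Tesla’s trigger logic, which is not public; the `TelemetrySample` type and the `HARD_BRAKE_MPS2` threshold are assumptions chosen for the example.

```python
from dataclasses import dataclass

# Hypothetical threshold: decelerations at or beyond this are flagged for review.
HARD_BRAKE_MPS2 = -6.0


@dataclass
class TelemetrySample:
    t: float          # timestamp in seconds
    speed_mps: float  # vehicle speed in meters per second


def hard_brake_events(samples, threshold=HARD_BRAKE_MPS2):
    """Return timestamps where acceleration between consecutive samples is <= threshold."""
    events = []
    for prev, cur in zip(samples, samples[1:]):
        dt = cur.t - prev.t
        if dt <= 0:
            continue  # skip duplicate or out-of-order samples
        accel = (cur.speed_mps - prev.speed_mps) / dt
        if accel <= threshold:
            events.append(cur.t)
    return events


# Made-up trace: a sharp speed drop between t=0.5 s and t=1.0 s.
trace = [
    TelemetrySample(0.0, 15.0),
    TelemetrySample(0.5, 14.8),
    TelemetrySample(1.0, 11.0),
    TelemetrySample(1.5, 10.9),
]
print(hard_brake_events(trace))  # [1.0]
```

In a real pipeline a trigger like this would only nominate clips; deciding whether a flagged clip is a genuine near‑miss would still require video review or further automated checks.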

3. Robotaxi‑Oriented Hardware and Redundancy

For a true robotaxi, hardware must support:

- Compute redundancy – dual compute paths that can fail over safely.

- Power redundancy – backup circuits and robust power management.

- Remote operations – secure, low‑latency connections for remote monitoring and intervention when permitted by law.
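The compute‑redundancy requirement can be sketched as a simple heartbeat check. This is a generic failover pattern under stated assumptions, not a description of Tesla’s hardware: the `ComputeUnit` type, the 100 ms timeout, and the `Action` names are all hypothetical.

```python
from dataclasses import dataclass
from enum import Enum


class Action(Enum):
    USE_PRIMARY = "use_primary"
    USE_SECONDARY = "use_secondary"
    MINIMAL_RISK_STOP = "minimal_risk_stop"  # e.g. pull over and stop safely


@dataclass
class ComputeUnit:
    name: str
    last_heartbeat_s: float  # time of the most recent heartbeat, in seconds


def select_compute(primary, secondary, now_s, timeout_s=0.1):
    """Pick the first unit with a recent heartbeat; stop safely if neither is healthy."""
    def healthy(unit):
        return (now_s - unit.last_heartbeat_s) <= timeout_s

    if healthy(primary):
        return Action.USE_PRIMARY
    if healthy(secondary):
        return Action.USE_SECONDARY
    return Action.MINIMAL_RISK_STOP


# Primary has been silent for 0.5 s, secondary reported 0.05 s ago.
decision = select_compute(
    ComputeUnit("A", last_heartbeat_s=9.5),
    ComputeUnit("B", last_heartbeat_s=9.95),
    now_s=10.0,
)
print(decision)  # Action.USE_SECONDARY
```

The design choice worth noting is the third branch: a redundant system needs a defined behavior for the case where both paths fail, rather than assuming the backup is always available.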

“If you believe in the scaling hypothesis for AI, then the path to self-driving is not a question of if we can hard-code enough logic, but whether we can collect and train on enough multi-modal data.” — Andrej Karpathy, former Tesla Director of AI

Scientific and Societal Significance

The gradual appearance of Tesla robotaxi test vehicles is more than a product rollout—it is an ongoing urban intelligence experiment. Each car is both an agent and a sensor, contributing to a global dataset about human driving, traffic dynamics, and rare events that are statistically invisible in traditional transportation models.

Potential Safety Impact

According to the World Health Organization, road crashes kill roughly 1.2 million people worldwide each year. Human error is implicated in the majority of cases. Robotaxis promise:

- Consistent adherence to speed limits and following distances.

- Reduction in impaired, distracted, or fatigued driving.

- Rapid adoption of safety improvements via software updates.

However, the key scientific question is not whether AI can drive well on average, but whether it can reliably outperform humans across the fat tail of rare events—black ice, unpredictable pedestrians, or unusual infrastructure layouts.
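A back‑of‑the‑envelope calculation shows why the tail is so hard to measure. The sketch below assumes fatal crashes arrive as a Poisson process and asks how many fatality‑free miles a fleet would need before one could claim, at a chosen confidence level, that its fatality rate is below a human baseline. The baseline of about 1.1 fatalities per 100 million miles is an illustrative assumption, not a figure from this article.

```python
import math


def miles_needed(human_rate_per_mile, confidence=0.95):
    """Fatality-free miles needed to reject 'rate >= human rate' at the given confidence.

    Under a Poisson model, P(zero events in n miles) = exp(-rate * n).
    Requiring that probability to be at most (1 - confidence) gives
    n >= -ln(1 - confidence) / rate.
    """
    return -math.log(1.0 - confidence) / human_rate_per_mile


# Assumed human baseline: ~1.1 fatalities per 100 million vehicle miles.
human_rate = 1.1 / 100_000_000
print(f"{miles_needed(human_rate) / 1e6:.0f} million miles")  # 272 million miles
```

Under these assumptions the answer is on the order of hundreds of millions of miles with zero fatalities, which is why a test fleet of a few dozen cars cannot settle the safety question on its own.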

Economic and Urban-Planning Impact

If Tesla or any other company reaches safe, scalable robotaxi deployment, cities could see:

- Reduced private car ownership in dense areas, as households shift from an upfront vehicle purchase (CAPEX) to pay‑per‑ride spending (OPEX).

- Repurposed parking lots and garages into housing or commercial use.

- New logistics patterns as autonomous vehicles handle late‑night deliveries and micro‑mobility tasks.

“The fundamental utility of a vehicle increases by around a factor of 5 if you can operate it nearly 24/7 in a robotaxi fleet.” — Elon Musk, Tesla CEO

Key Milestones on the Road to Tesla Robotaxis

Tesla’s robotaxi narrative has evolved through a series of public milestones and incremental technical achievements. While timelines have often slipped, the pace of software improvement appears to have accelerated.

Development Timeline (Condensed)

- 2016–2019: Successive iterations of Autopilot and early Full Self‑Driving features, with functionality focused largely on highway driving.

- 2020–2023: Expansion of FSD Beta to hundreds of thousands of drivers; notable improvements in urban navigation and unprotected turns.

- 2023–2024: Larger neural networks, more end‑to‑end training, and visible reduction in driver interventions among power users.

- 2024–2025: Increasing sightings of robotaxi‑configured vehicles, especially in Austin and the Bay Area; more internal fleet testing and partial regulatory filings in certain jurisdictions.

The tracker’s counts of 29 vehicles in Austin and 97 in the Bay Area may correspond to internal milestones such as:

- Validation of new perception models and planning heuristics.

- Structured “route coverage” maps for future commercial service zones.

- Stress‑testing compute and thermal envelopes in real‑world duty cycles approaching a robotaxi’s expected 16–20 hours per day of operation.

Challenges: From Technical Edge Cases to Public Trust

Even as Tesla expands its robotaxi test footprint, several hard problems remain unsolved, both technically and institutionally.

1. Technical Edge Cases

- Occlusions and ambiguous signals – bicycles emerging from behind vans, partially obscured signage, or pedestrians signaling drivers non‑verbally.

- Adverse weather – heavy rain, fog, and glare can reduce camera reliability.

- Non‑standard infrastructure – poorly marked lanes, temporary construction zones, and region‑specific rules.

Tesla’s reliance on cameras amplifies these challenges, as it cannot fall back to lidar’s precise depth measurements. The company counters this by scaling data and training, but real‑world robustness remains the true test.

2. Regulatory and Liability Questions

Before robotaxis operate without human drivers, regulators must be satisfied about:

- Minimum safety performance relative to human baselines.

- Data transparency after incidents, including black‑box logs.

- Clear liability frameworks for collisions and traffic violations.

California has already paused or restricted some AV operators over safety concerns, showing that regulators will act aggressively if the data suggests unacceptable risk.

3. Social Acceptance and Labor Impacts

Robotaxis have implications for:

- Professional drivers in taxi, ride‑hail, and delivery sectors.

- Public trust in AI systems sharing roads with pedestrians and cyclists.

- Insurance models that are currently built around individual human drivers.

“Technological capability is only part of the equation. The harder challenge may be aligning policy, public expectations, and economic transitions with the speed of AI deployment.” — Hypothetical commentary from a mobility policy researcher

Practical Tools for Following Tesla’s Robotaxi Journey

If you want to track Tesla’s robotaxi progress beyond raw headlines, a few resources are consistently useful:

- Independent trackers and mapping tools that log robotaxi‑style vehicles by plate and location.

- Regulatory filings with U.S. states like California and Texas, which often list testing permits and operational constraints.

- Technical talks and papers from Tesla’s AI Day events and from former Tesla AI leaders now in academia or startups.

For a deeper dive into the underlying AI techniques used by Tesla and other AV players, books like Deep Learning by Goodfellow, Bengio, and Courville (MIT Press, Adaptive Computation and Machine Learning series) offer a rigorous foundation in the neural network methods that make large‑scale autonomous driving possible.

Enthusiasts and professionals who want to experiment with on‑road data collection and driver‑assistance (in a research, non‑commercial context) often pair a Tesla or similar EV with high‑bandwidth drive recorders and compute. A common choice among practitioners is a fast external SSD such as the Samsung T7 Portable SSD 1TB, which can handle continuous dashcam and telemetry logging.

For ongoing commentary and breakdowns of Tesla’s autonomy progress:

- YouTube technical explainers focusing on FSD updates.

- LinkedIn analysis from autonomous vehicle engineers and product leaders.

- NextBigFuture for futurist‑oriented coverage tying Tesla’s robotaxis to broader trends in energy, AI, and space.

Conclusion: 29 Cars in Austin, Billions of Miles Ahead

The Tesla Robotaxi Tracker’s count of 29 vehicles in Austin and 97 in the Bay Area represents a minuscule fraction of global traffic—but a disproportionately important dataset for autonomy. Each mile they drive embodies a negotiation between deep learning, human‑designed safeguards, and the unpredictable complexity of city life.

Whether Tesla delivers on its most aggressive robotaxi timelines is still uncertain. What is clear is that:

- The company has committed more heavily than almost anyone else to a camera‑only, AI‑at‑scale approach.

- Key testbeds like Austin and the Bay Area will shape both the technical playbook and the regulatory narrative.

- Independent trackers and open analysis are essential checks on corporate claims, turning robotaxi sightings into a form of crowdsourced transparency.

Over the next few years, expect the numbers in those trackers to grow, the networks to improve, and the debates over safety, labor, and city design to intensify. For technologists and policymakers alike, following those 29 cars in Austin is not a curiosity—it is a preview of how AI may soon reshape everyday mobility.

Additional Context: How to Critically Evaluate Robotaxi Claims

If you are evaluating Tesla’s robotaxi trajectory—or that of any AV company—consider tracking a few quantitative indicators over time:

- Disengagement rate per 1,000 miles (where reported), distinguishing between safety‑driver interventions and software‑initiated safe stops.

- Operational Design Domain (ODD) – weather, time of day, and road types in which a service is allowed to operate driverless.

- Geographic scaling – number of cities or ZIP codes with meaningful coverage, not just pilot routes.

- Incident transparency – availability of post‑incident data and clear root‑cause analyses.
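The first indicator above is simple to compute once event counts and mileage are available. The sketch below is a minimal example with made‑up numbers; the event labels `driver_intervention` and `software_safe_stop` are hypothetical names for the two categories, not terms from any regulator’s reporting format.

```python
from collections import Counter


def disengagements_per_1000_miles(events, miles_driven):
    """Return the disengagement rate per 1,000 miles, broken down by event kind."""
    if miles_driven <= 0:
        raise ValueError("miles_driven must be positive")
    counts = Counter(events)
    return {kind: 1000.0 * n / miles_driven for kind, n in counts.items()}


# Made-up log: three safety-driver interventions and one software-initiated stop.
events = ["driver_intervention"] * 3 + ["software_safe_stop"]
rates = disengagements_per_1000_miles(events, miles_driven=8000)
print(rates)  # {'driver_intervention': 0.375, 'software_safe_stop': 0.125}
```

Keeping the two categories separate matters because a falling total can hide a shift from one kind of event to the other.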

When those metrics move in the right direction simultaneously, the presence of “29 cars in Austin” will mean more than a novelty—it will signal that the robotaxi era is genuinely arriving, not just being forecast.

References / Sources

- NextBigFuture – Science and technology news and analysis

- Tesla – AI and Full Self‑Driving overview

- Andrej Karpathy – Selected AI and deep learning papers on arXiv

- NHTSA – Vehicle Automation and Safety Resources

- IEEE – Autonomous Vehicles Technology & Policy Coverage

- World Health Organization – Road Traffic Injuries Fact Sheet